Most enterprise AI failures in 2025–2026 are not technology failures. They are governance failures. The model worked. The pilot looked promising. But no one had a clear answer to who owns this in production, what counts as an acceptable error rate, who reviews the data the agent acts on, or what triggers a rollback. AI governance is the operating answer to those questions — the set of decision rights, accountability structures, and review cadences that determine how an organization explores, adopts, scales, and retires AI systems.

This page explains what AI governance is, the four decisions it forces every enterprise to make, the cross-functional system that makes governance work, and the minimum viable operating cadence to start governing AI without slowing innovation.

What Is AI Governance?

AI governance is the set of decision rights, accountability structures, and operating cadences that determine how an organization explores, adopts, scales, and retires AI systems. It is the answer to who decides, who is accountable, and how often we review. Done well, AI governance accelerates adoption by removing ambiguity. Done poorly, it becomes governance theater that slows everything down without preventing the failures it was created to catch.

What AI governance is not: a document that sits on SharePoint and is referenced once a quarter, a list of forbidden tools, an IT policy, an ethics review board that meets after a deployment is live, or something that can be outsourced to an external consulting firm and then ignored.

What AI governance is: a small, named set of decisions that get reviewed on a fixed cadence by people who have the authority to stop, fund, redirect, or scale a given AI initiative. It is the operating layer that connects organizational AI Readiness to durable AI Leadership — and the precondition for safely deploying Agentic AI at scale.

The Four Governance Decisions AI Forces

Every enterprise AI initiative — pilot, production, agentic, or embedded SaaS feature — eventually requires four decisions. Most organizations make them by accident, late, and inconsistently. The work of AI governance is to make them deliberately, early, and uniformly.

1. Who is accountable when the AI is wrong?

Not who runs the model. Who answers to the customer, the regulator, or the board when an output causes harm. Accountability cannot be delegated to a vendor, an LLM provider, or “the system.” Organizations can delegate work to agents, but they cannot delegate responsibility. Naming the accountable person — by role, not by name — is the first governance decision.

2. What level of autonomy is acceptable for this workload?

3. What triggers a pause, rollback, or kill?

4. Who reviews the system and how often?

AI Governance Is Not an IT Initiative — It’s a Cross-Functional System

A common AI governance failure is treating it as an IT initiative. IT cannot be the HR department for AI agents — and HR cannot be the platform team. Each function owns a piece, and the governance owner’s job is to keep the seams from becoming gaps.

IT and the AI team

Own platform health, model lifecycle, security posture, integration boundaries, and the technical kill switches. Accountable for system health, not workforce impact and not regulatory exposure.

Human Resources

Owns workforce impact: which roles change, how skills are developed, how performance management evolves when AI handles part of the work, and how trust is rebuilt when a deployment displaces tasks people previously owned.

The business unit

Owns workload selection, accountable-person naming, acceptance criteria, and the operational cost-of-error tolerance for its own decisions. Governance cannot be done to a business unit — it has to be done with the unit that owns the workflow.

Legal and compliance

Own regulatory mapping (sectoral rules, data residency requirements, AI-specific legislation), contract terms with vendors, and the disclosure obligations attached to specific use cases. The faster the regulatory landscape moves, the more this seat earns its place.

The governance owner does not do any of this work. They convene the people who do it, hold the cadence, and resolve the conflicts that arise at the boundaries between functions, which is where most conflicts arise.

The Minimum Viable AI Governance Operating Cadence

You do not need a 60-page framework to start governing AI. You need three named roles, two recurring meetings, and one decision log. Everything else is optimization.

Three named roles

A governance owner (typically a senior business leader, not IT) accountable for the cadence and for unblocking decisions. A technical owner (CIO, CTO, or AI lead) accountable for system health, security, and lifecycle. A risk owner (legal, compliance, or risk management depending on industry) accountable for regulatory exposure and acceptable-error policy.

Two recurring meetings

A monthly review of every AI workload in production, going through the four decisions for each: accountable person named, autonomy level documented, pause triggers active, review cadence confirmed. A quarterly portfolio review with executive sponsors: which initiatives are scaling, which are being killed, what budget is being reallocated, and what the workforce-impact picture looks like.
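The monthly review amounts to a checklist run over each production workload. The following is a minimal Python sketch of that idea, not a prescribed tool: the `Workload` class, its field names, the `open_items` helper, and the sample workloads are all hypothetical and exist only to illustrate the four checks. It treats a pause trigger as present when at least one is recorded, which is a simplification of "active".

```python
from dataclasses import dataclass, field


@dataclass
class Workload:
    """One AI workload in production. All field names are illustrative."""
    name: str
    accountable_role: str = ""   # decision 1: a role, not an individual's name
    autonomy_level: str = ""     # decision 2: label is a made-up example
    pause_triggers: list[str] = field(default_factory=list)  # decision 3
    review_cadence: str = ""     # decision 4


def open_items(w: Workload) -> list[str]:
    """Return the governance decisions still unanswered for a workload."""
    gaps = []
    if not w.accountable_role:
        gaps.append("accountable person not named")
    if not w.autonomy_level:
        gaps.append("autonomy level not documented")
    if not w.pause_triggers:
        gaps.append("no pause triggers defined")
    if not w.review_cadence:
        gaps.append("review cadence not confirmed")
    return gaps


# Hypothetical sample portfolio, for illustration only.
portfolio = [
    Workload("invoice-matching agent", "Head of Accounts Payable",
             "human-approves", ["error rate above agreed threshold"], "monthly"),
    Workload("support-reply drafts", accountable_role="Director of Support"),
]

for w in portfolio:
    gaps = open_items(w)
    status = "OK" if not gaps else "; ".join(gaps)
    print(f"{w.name}: {status}")
```

Any workload that returns a non-empty list becomes an agenda item for the meeting. The point of the sketch is that the review asks the same four questions of every workload, so gaps surface uniformly rather than by accident.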

One decision log

A single, durable record — a wiki page, a tracked document, a small dashboard — capturing every governance decision: what was decided, by whom, on what date, and what the trigger for revisiting is. The decision log is the artifact that survives turnover and the artifact regulators and auditors will eventually ask to see.
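As an illustration of how small the decision log can be, here is a minimal Python sketch of a single log entry, assuming one record per decision. The `DecisionLogEntry` class, its field names, the `to_json_line` helper, and the sample values are hypothetical. They mirror the four elements named above (what, by whom, when, and the revisit trigger) plus the workload the decision applies to.

```python
import json
from dataclasses import dataclass, asdict
from datetime import date


@dataclass(frozen=True)
class DecisionLogEntry:
    """One governance decision. Field names are illustrative assumptions."""
    workload: str          # which AI workload the decision applies to
    decision: str          # what was decided
    decided_by: str        # by whom (a role, not an individual's name)
    decided_on: date       # on what date
    revisit_trigger: str   # what causes the decision to be reopened


def to_json_line(entry: DecisionLogEntry) -> str:
    """Serialize an entry as one JSON line, suitable for an append-only log."""
    record = asdict(entry)
    record["decided_on"] = entry.decided_on.isoformat()
    return json.dumps(record, sort_keys=True)


# Made-up example values, for illustration only.
entry = DecisionLogEntry(
    workload="invoice-matching agent",
    decision="Agent may propose matches; a human approves every payment",
    decided_by="Governance owner",
    decided_on=date(2026, 1, 15),
    revisit_trigger="Next monthly review, or any pause trigger firing",
)
line = to_json_line(entry)
print(line)
```

The entry is frozen so a recorded decision is not edited in place; a changed decision becomes a new entry, which keeps the history that survives turnover. A wiki table or spreadsheet with the same columns serves the same purpose.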

This is the floor. Mature organizations layer on workload risk tiers, formal acceptance testing, model registries, and red-team programs. But every governance program that works in practice has these three pieces underneath, and most that fail in practice are missing one of them.

Related Articles on AI Governance

-

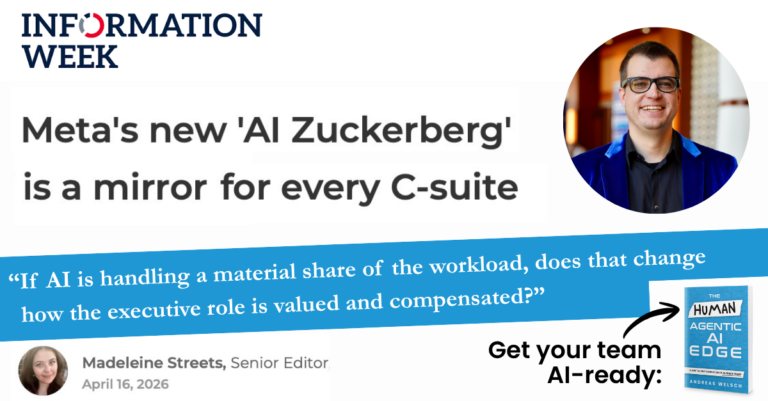

AI Leadership in the Age of Executive Digital Twins: What “AI Zuckerberg” Signals for the C-Suite

Assess what “AI Zuckerberg” signals for AI leadership: digital twins, drift risk, trust impacts,…

-

Why Governance Is Critical For AI Innovation

Build AI governance that accelerates adoption without chaos. Key lessons on policy, training, measurement,…

-

Agentic AI for Process Excellence: Scale Automation Without Losing Accountability

Strengthen process excellence with Agentic AI using clear roles, guardrails, escalation thresholds, and ownership…

-

Why Agentic AI Won’t Bring The “SaaSpocalypse” Overnight

Andreas Welsch explains how agentic AI reshapes Enterprise SaaS: disruption risk, outcome-based pricing, governance,…

-

Avoiding “AI Workslop” and Designing Accountable Work

Avoid AI work slop by redesigning work, decision rights, and governance as agentic AI…

-

Agentic AI and the Human Edge

Agentic AI adoption, shadow AI risks, human-in-the-loop governance, and the four A’s for accountable…

-

Agentic AI: Practical Strategies for Scaling, Governance, and Workforce Adoption

Agentic AI is moving beyond proof-of-concept pilots into operational deployments.

-

AI Leadership: How Executives Boost Potential With AI (Without Workslop)

Boost AI outcomes beyond email drafts by preventing AI workslop, building champions networks, and…

-

Agentic AI Governance: How Leaders Can Prevent “Agent Slop” From Becoming a Productivity Crisis

Learn how agent slop emerges with AI agents and how leaders can reduce risk…

-

AI Leadership in Practice: Governance, “AI Slop,” and What Comes Next

Learn practical AI leadership tactics: lightweight governance, preventing AI slop, managing tool sprawl, and…

-

Practical Adoption, Governance, and Measurable Impact for Agentic AI

What is Agentic AI really, how to avoid agent washing, and how can leaders…

-

AI Leadership: How to Bring AI Into the Business Without the Hype

Andreas Welsch explains how AI leadership drives strategy-first adoption, scalable pilots, data readiness, and…

From AI Governance Theory to Executive Practice

Read the Handbook

The AI Leadership Handbook is Andreas Welsch’s first best-selling book — a practical guide to introducing AI into your organization, designing governance that accelerates rather than blocks, and keeping humans at the center of AI use. Based on interviews with 60+ AI leaders and experts.

Build Leadership Capability

The Certified AI Leader™ Program is a four-tier curriculum (AI Explorer, AI Strategist, AI Innovator, AI Visionary) that builds AI governance capability across your organization — from first-line managers through the C-suite. Every cohort includes a capstone project applied to a real governance problem in your business.

Get Senior-Level Advisory

AI Advisory Services help enterprise leaders design and operate the AI governance system: naming the accountable roles, setting the cadence, defining kill triggers, and sequencing remediation when audits expose gaps. Advised by 2x best-selling AI author Andreas Welsch with frameworks proven at Fortune 500 scale.

Bring Andreas to Your Event

Keynotes and executive panels on AI governance, agentic AI, and the workforce shifts AI is producing. Past audiences include Fortune 500 executive teams, industry conferences, and corporate leadership events.
