Prepare for More Autonomous Work in the Era of Agentic AI

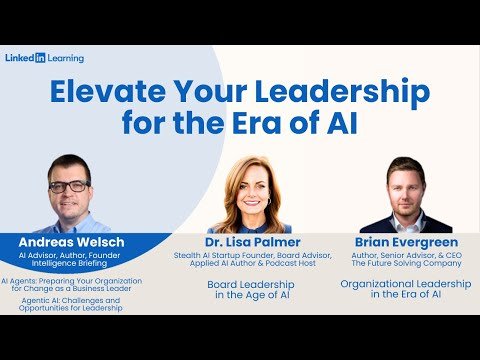

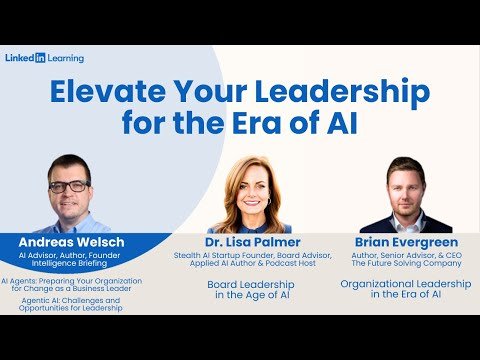

AI leadership is shifting quickly as agentic AI moves from experimentation to capabilities embedded in commercial products. In a recent conversation hosted by Andreas Welsch, leaders compared notes on what this means for governance, strategy, and how work gets done when software can act with greater autonomy.

The discussion brought together board, executive, and operational perspectives: Dr. Lisa Palmer (board leadership in the age of AI), Brian Evergreen (organizational leadership in the era of AI), and Welsch (agentic AI and how organizations can prepare for it). The throughline was practical: leaders need hands-on familiarity with AI tools and a clear approach to change management.

Welsch, an AI leadership expert, emphasized that the biggest change is a new working paradigm: shifting from telling software exactly what to do toward giving systems goals and supervising iterative outcomes. That shift creates opportunity—but also a new responsibility for leaders to ensure quality, trust, and ethical use.

Executive Summary

- Agentic AI changes work from task execution to goal-driven iteration.

- Hands-on use by executives accelerates strategic clarity and adoption.

- Multi-agent “virtual teams” are emerging inside business functions.

- Human-in-the-loop review remains essential for trust and usefulness.

- Enablement requires training plus internal communities that create multipliers.

Key Takeaways

- Andreas Welsch highlights a paradigm shift: leaders can increasingly give AI a goal, not just instructions.

- Welsch recommends hands-on experimentation because vendors are already embedding agentic capabilities into their products.

- Welsch points to customer service as an early, high-impact domain for agentic AI (triage, retrieval, drafting, and supervised sending).

- Welsch stresses that usefulness and trust depend on people being able to assess whether AI output is correct and complete.

- Welsch describes workforce enablement as foundational training plus hands-on exercises and internal “multipliers.”

- Welsch anticipates “virtual teams” of agents that collaborate within and across departments, and eventually across companies.

- Welsch urges leaders at every level to build AI literacy now to navigate increasing autonomy responsibly.

What is AI leadership?

AI leadership is the executive capability to guide an organization’s strategy, governance, and workforce through AI-driven change—especially when systems become more autonomous. In this conversation, Andreas Welsch frames AI leadership as combining practical experimentation with clear oversight: leaders must understand how AI tools work in real workflows, set goals and guardrails, and ensure people can validate outputs before decisions or communications are finalized.

Why this conversation matters

This article is based on a leadership conversation hosted by Andreas Welsch focused on elevating leadership in the era of AI. The audience was business leaders looking for tangible guidance: where agentic AI fits, what changes in day-to-day work, and how leaders can prepare teams for new levels of autonomy.

The discussion is especially relevant because agentic AI is no longer a distant concept. Welsch notes that early agentic frameworks emerged recently, and within roughly a year, major vendors began embedding these capabilities into products—creating pressure on leaders to move from curiosity to operational readiness.

Key Insight: As agentic AI becomes easier to access, AI leadership becomes less about “knowing the buzzwords” and more about shaping how people and AI systems work together—setting goals, reviewing outcomes, and building confidence in what gets shipped to customers, employees, and stakeholders.

AI leadership starts with hands-on familiarity—because access is democratized

Welsch and Dr. Palmer both emphasize hands-on engagement, including at senior levels. The reason is simple: access to AI tools is increasingly democratized. Leaders can use many tools directly (often as apps), which accelerates understanding of what is possible—and what is risky.

Welsch argues that this accessibility changes expectations inside organizations. When employees and peers experiment, boards and executives naturally ask: What is the AI strategy? What should be deployed? What policies and practices ensure reliable outcomes?

The speakers present hands-on learning not as optional experimentation but as a practical way to reduce ambiguity. Leaders who experience AI capabilities firsthand are better equipped to identify high-value workflows and ask sharper governance questions.

Key Insight: Welsch’s view is that AI leadership now requires direct exposure to tools and workflows. When anyone can access AI, executive decision-making cannot rely solely on secondhand summaries. Hands-on experience widens the “aperture of the possible” and improves judgment about where autonomy helps—and where supervision is required.

From deterministic software to agentic AI: a paradigm shift toward goals

Welsch describes a shift in how work is delegated to software. Historically, software was used for specific tasks with explicit instructions. With agents, leaders and teams increasingly provide a goal (for example, producing a marketing brief), and the system iterates through steps to assemble an outcome.

In Welsch’s example, one agent might generate personas, another might draft copy, and a third might support creative elements; the results are then combined into a brief that a marketer reviews and adjusts. This creates a back-and-forth pattern that differs from earlier, instruction-driven automation.
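To make the goal-driven pattern concrete, here is a minimal Python sketch of the marketing-brief example. It assumes three hypothetical agent functions (`persona_agent`, `copy_agent`, `creative_agent`) that return placeholder text instead of calling a model; these names and the `Brief` structure are illustrative only, not Welsch's design or any vendor's API. What it shows is the shape of the workflow: one goal goes in, several agents each contribute a part, and nothing is marked approved until a person reviews it.

```python
from __future__ import annotations

from dataclasses import dataclass, field


# Hypothetical stand-ins for specialized agents. In practice each would call a
# model or tool; here they return fixed text so the hand-offs are easy to follow.
def persona_agent(goal: str) -> list[str]:
    return [f"Persona A for: {goal}", f"Persona B for: {goal}"]


def copy_agent(goal: str, personas: list[str]) -> str:
    return f"Draft copy for '{goal}' aimed at {len(personas)} personas"


def creative_agent(goal: str) -> str:
    return f"Creative concept for '{goal}'"


@dataclass
class Brief:
    goal: str
    personas: list[str]
    copy: str
    creative: str
    approved: bool = False
    reviewer_notes: list[str] = field(default_factory=list)


def build_brief(goal: str) -> Brief:
    """The system receives a goal; each agent contributes one part of the brief."""
    personas = persona_agent(goal)
    return Brief(
        goal=goal,
        personas=personas,
        copy=copy_agent(goal, personas),
        creative=creative_agent(goal),
    )


def marketer_review(brief: Brief, edits: dict[str, str] | None = None) -> Brief:
    """Human checkpoint: the marketer applies edits, then approves the brief."""
    for field_name, new_value in (edits or {}).items():
        setattr(brief, field_name, new_value)
        brief.reviewer_notes.append(f"edited {field_name}")
    brief.approved = True
    return brief


draft = build_brief("Launch brief for a new product line")
final = marketer_review(draft, {"copy": "Revised copy written by the marketer"})
print(final.approved, final.reviewer_notes)  # True ['edited copy']
```

Keeping `approved` false until `marketer_review` runs is the design choice that matters here: the agents can produce and revise drafts freely, but the final checkpoint stays with a person.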

The leadership challenge is not only technical feasibility. It is operational: defining goals, ensuring the right information sources are used, and designing review checkpoints so outputs are trustworthy before they are acted on.

Key Insight: Welsch positions agentic AI as a new working paradigm: AI can be assigned goals and produce iterative drafts, not just execute predetermined steps. That makes supervision and review a core leadership responsibility—similar to managing human teams—because leaders must judge whether interim results are accurate, complete, and ready to use.

Early applications: customer service as a high-volume, repeatable domain

Welsch points to customer service as a practical starting point. In many organizations, a large share of incoming requests are recurring questions (for example, common IT requests such as password resets, or repeated product troubleshooting questions).

In the scenario described, an agent first identifies what a customer is asking, then consults a knowledge base or product documentation, and finally proposes a response. A human customer service representative can review the suggested answer, edit it, and send it—maintaining a human-in-the-loop approach.

Welsch notes that multi-agent setups may include a supervising or “critiquing” agent that checks tone, length, and style before a response is finalized. The objective is not to remove people from the workflow; it is to reduce manual lookup and drafting time while preserving accountability.
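The customer service flow can be sketched the same way. This is a minimal illustration under stated assumptions: keyword matching stands in for a model-based triage agent, a two-entry dictionary stands in for the knowledge base, and the critique agent checks only length and one tone rule. All function names are hypothetical. The point is structural: each agent has a narrow job, questions with no matching article go straight to a person, and the representative remains the one who sends.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical knowledge base; a real deployment would query product documentation.
KNOWLEDGE_BASE = {
    "password_reset": "You can reset your password from the sign-in page via 'Forgot password'.",
    "device_troubleshooting": "Please restart the device and check that its firmware is up to date.",
}


def triage_agent(message: str) -> str | None:
    """Identify the request type (keyword matching as a stand-in for a model)."""
    text = message.lower()
    if "password" in text:
        return "password_reset"
    if "restart" in text or "not working" in text:
        return "device_troubleshooting"
    return None  # unknown intent: route to a representative


def retrieval_agent(intent: str) -> str:
    return KNOWLEDGE_BASE[intent]


def drafting_agent(customer_name: str, article: str) -> str:
    return f"Hi {customer_name}, thanks for reaching out. {article}"


def critique_agent(draft: str, max_chars: int = 300) -> list[str]:
    """Supervisory check on length and tone; an empty list means the draft passes."""
    issues = []
    if len(draft) > max_chars:
        issues.append("too long")
    if "!!" in draft:
        issues.append("tone: excessive exclamation")
    return issues


@dataclass
class Suggestion:
    intent: str | None
    draft: str
    issues: list[str]


def suggest_reply(customer_name: str, message: str) -> Suggestion:
    intent = triage_agent(message)
    if intent is None:
        return Suggestion(None, "", ["no matching article: escalate to a representative"])
    draft = drafting_agent(customer_name, retrieval_agent(intent))
    return Suggestion(intent, draft, critique_agent(draft))


def representative_send(suggestion: Suggestion, edited_text: str | None = None) -> str:
    """Human-in-the-loop: the representative sends an edited reply or an issue-free draft."""
    if edited_text is not None:
        return edited_text  # the representative rewrote or corrected the draft
    if suggestion.issues:
        raise ValueError(f"Draft needs attention before sending: {suggestion.issues}")
    return suggestion.draft


suggestion = suggest_reply("Sam", "I forgot my password")
print(suggestion.intent, suggestion.issues)  # password_reset []
print(representative_send(suggestion))
```

Raising an error instead of silently sending a flagged draft is deliberate: the critique agent can point out problems, but only a person can override them.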

Key Insight: Customer service illustrates how AI leadership translates into workflow design. Welsch emphasizes pairing agents with supervision: one agent can triage and retrieve, another can draft, and a reviewer (human and/or supervisory agent) can ensure the output matches the brand voice and resolves the customer’s real problem.

Workforce enablement: from prompt training to communities of practice

Welsch observes that many large organizations started with basic prompt engineering training as an entry point to generative AI productivity. He frames this as a useful start, but not the end of enablement.

Drawing on earlier enterprise experience, Welsch describes an enablement pattern: build foundational understanding (what AI and machine learning are, and now what agentic AI is), run hands-on exercises, and create a community of practice that brings together product managers, engineers, and architects.

Crucially, teams want time for dialogue and exchange—what worked, what did not, and where opportunities exist. Welsch highlights the importance of early adopters becoming “multipliers” who advocate and coach others, accelerating adoption while keeping learning grounded in real work.

Key Insight: Welsch links sustainable AI adoption to structured enablement. Foundational training and hands-on practice create literacy, but communities of practice turn learning into shared operating knowledge. The multiplier effect—early adopters coaching peers—helps scale agentic AI responsibly across functions without relying on a small expert group.

Human-in-the-loop oversight: usefulness, trust, and quality control

Welsch underlines a practical risk: even when AI accelerates work, leaders and employees still need the skill to assess outputs. The central question becomes: Is the result correct, true, comprehensive, and appropriate before it is passed on?

This mirrors a familiar leadership pattern. A leader assigns work, receives an interim result, and evaluates whether the output is usable or needs additional considerations. Welsch argues the same mindset applies to agentic workflows, particularly as autonomy increases.

Dr. Palmer also reinforces the importance of human-plus-AI partnership and continued attention to bias and responsible design. Taken together, the conversation frames governance not as a separate exercise but as embedded in how work is reviewed and approved.

From digital transformation to autonomous transformation

Welsch suggests a directional shift: organizations may be moving from digital transformation toward autonomous transformation. His reasoning stems from how agentic AI changes the nature of work—especially when multiple agents coordinate to complete tasks with minimal human prompting.

In Welsch’s view, the evolution begins with single-purpose agents, then expands into teams of agents within a function (for example, marketing). Over time, agent “teams” may collaborate across departments, such as marketing agents interacting with finance agents for budget alignment and approvals.
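A cross-department hand-off can be sketched as two cooperating agents. In this hypothetical example, a marketing agent asks a finance agent to approve campaign spend; the finance agent approves small requests within the remaining budget, rejects anything that exceeds it, and escalates larger requests to a human owner. The class names, budget figures, and the 10,000 escalation threshold are assumptions for illustration, not part of Welsch's description.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class BudgetRequest:
    campaign: str
    amount: float


class FinanceAgent:
    """Hypothetical finance agent that approves spend against a remaining budget."""

    def __init__(self, remaining_budget: float, human_review_threshold: float):
        self.remaining_budget = remaining_budget
        self.human_review_threshold = human_review_threshold

    def review(self, request: BudgetRequest) -> str:
        if request.amount > self.remaining_budget:
            return "rejected: exceeds remaining budget"
        if request.amount > self.human_review_threshold:
            # Larger commitments stay with an accountable person.
            return "escalated: needs approval from a finance owner"
        self.remaining_budget -= request.amount
        return "approved"


class MarketingAgent:
    """Hypothetical marketing agent that plans campaigns and requests budget from finance."""

    def __init__(self, finance: FinanceAgent):
        self.finance = finance

    def plan_campaign(self, campaign: str, estimated_cost: float) -> str:
        decision = self.finance.review(BudgetRequest(campaign, estimated_cost))
        return f"{campaign}: {decision}"


finance = FinanceAgent(remaining_budget=50_000, human_review_threshold=10_000)
marketing = MarketingAgent(finance)
for name, cost in [("Spring email series", 4_000), ("Trade show booth", 25_000), ("National TV spot", 80_000)]:
    print(marketing.plan_campaign(name, cost))
# Spring email series: approved
# Trade show booth: escalated: needs approval from a finance owner
# National TV spot: rejected: exceeds remaining budget
```

Placing the escalation threshold in the finance agent's configuration reflects the ownership question raised throughout the conversation: as agents coordinate with each other, someone still has to decide which decisions they may make on their own.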

Looking further ahead, Welsch anticipates cross-company coordination where agent systems negotiate or optimize processes between organizations—effectively “agent-to-agent” collaboration at ecosystem scale.

Leadership Implications

Based on Andreas Welsch’s guidance in this discussion, several leadership actions stand out for executives shaping AI governance, strategy, and adoption.

- Institutionalize hands-on AI literacy: Ensure leaders and teams use AI tools directly to build informed judgment.

- Design human-in-the-loop workflows: Keep review checkpoints so AI outputs are verified before decisions or customer communications.

- Start with repeatable, high-volume domains: Customer service is a practical entry point for agentic AI support and supervised drafting.

- Build enablement beyond training: Combine foundational education, hands-on exercises, and communities of practice that share what works.

- Plan for autonomy as a work redesign issue: Prepare for “virtual teams” of agents across functions and define ownership for quality and accountability.

Why this conversation matters for AI leadership and workforce transformation

This conversation matters because it connects emerging technology to operational reality. Welsch frames agentic AI as more than a new feature set: it is a change in how work is specified (goals vs. instructions), executed (iterative collaboration), and governed (review and accountability).

It also speaks directly to workforce transformation. As autonomy increases, the value of human work shifts toward judgment, supervision, and the tasks that previously could not be prioritized due to “busy work.” Welsch encourages leaders at every level—board, executives, middle management, and individual contributors—to build readiness now.

Finally, the discussion underscores that speed and accessibility raise the stakes. With agentic AI arriving through major vendors and startups alike, organizations that treat enablement and workflow governance as core leadership responsibilities are better positioned to adopt responsibly.

Conclusion

AI leadership in the era of agentic AI requires more than enthusiasm for new tools. Andreas Welsch’s perspective centers on a practical shift: teams can increasingly delegate goals to AI systems, but leaders must redesign workflows for supervision, build trust through review, and enable the workforce through training and communities of practice.

As autonomous capabilities expand—from single agents to “virtual teams” across functions—the organizations that treat AI adoption as a leadership and workforce transformation program will be better positioned to capture value while maintaining accountability.


FAQ

What does AI leadership mean in practical terms?

AI leadership means guiding strategy, governance, and day-to-day adoption so AI improves outcomes without sacrificing accountability. In this discussion, it includes hands-on familiarity with tools, clear workflow oversight, and ensuring people can validate AI outputs before acting on them.

What is agentic AI and why does it change leadership expectations?

Agentic AI refers to systems that can pursue a goal through iterative steps, rather than executing only explicit instructions. Andreas Welsch describes this as a paradigm shift: leaders must define goals and checkpoints, then supervise whether outcomes are correct and usable.

Where can organizations start using agentic AI safely?

Organizations can start with repeatable, high-volume workflows where humans stay in the loop. Welsch points to customer service: agents can triage questions, retrieve relevant documentation, and draft responses, while people review, edit, and approve what gets sent.

How should leaders think about human-in-the-loop oversight?

Human-in-the-loop oversight means designing workflows so people review AI outputs for correctness, completeness, and appropriateness before decisions or communications are finalized. Welsch compares this to managing a team: leaders assess interim results and decide what needs refinement.

Is prompt engineering training enough for AI adoption?

Prompt engineering training is a useful start, but it is not sufficient for sustained adoption. Welsch notes that organizations also need hands-on exercises, ongoing dialogue about what works, and internal multipliers—often built through communities of practice across roles.

What workforce changes should executives expect from agentic AI?

Executives should expect work to shift toward supervision, judgment, and higher-value tasks as agents take on busy work. Welsch frames the opportunity as using freed capacity for tasks teams could not reach before, while maintaining accountability through review and governance.

How can leaders build organizational readiness for agentic AI?

Leaders can build readiness by combining foundational AI education with hands-on experimentation and structured peer learning. Welsch describes a pattern of training plus communities of practice, where early adopters become advocates and multipliers who spread practical know-how across teams.

What does “autonomous transformation” imply for governance?

Autonomous transformation implies more work is executed by systems with greater autonomy, increasing the need for clear ownership and review. Welsch anticipates “virtual teams” of agents within and across departments, making workflow governance and quality checkpoints central leadership tasks.

How should boards and executives approach AI leadership together?

Boards and executives should align on opportunity, risk, and public perception while ensuring leaders are hands-on enough to make informed decisions. In this conversation, Welsch emphasizes practical adoption and oversight, while Dr. Palmer highlights balancing efficiency goals with brand impact.